Things I've Made

On the front page, I highlight several of my snazziest projects. This page provides a more detailed look into some of the more obscure ones.

Writing and other creative pursuits

In no particular order:

Writing prompt generators

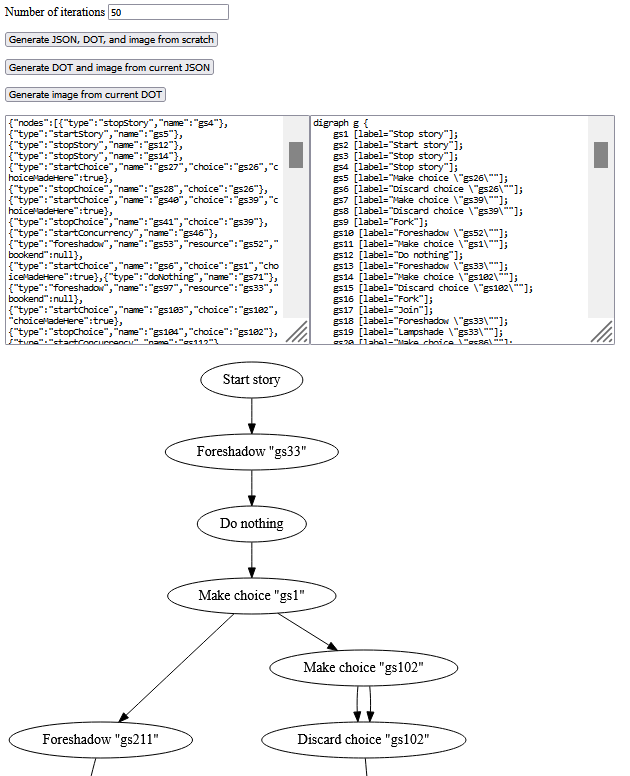

Some creative tools I've made to inspire writing ideas: a very simple prompt generator for scenarios where characters get into tomfoolery related to their unique talents, and an interactive plot structure generator based on graph rewriting.

Rinksprite

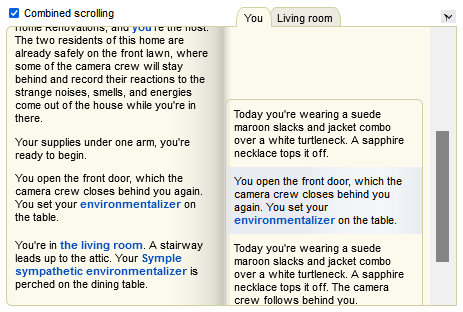

Symple Home Renovations

Magic makes home renovations easier than ever. Almost too easy.

Inspired by Seltani, I made a little text-based game engine (ContriverText) to try out some different UI ideas for multiplayer interactive hypertext fiction. The interface could use a lot of work, especially for a single-player demo like this one. Still, for the Bring Out Your Dead game jam, I put together this short work to showcase the engine. There are some more author's notes on the jam submission page.

The Shape of Our Container

A little hypertext piece I made for a game jam on the theme of "tiny utopias."

Megalomaniac

A short-lived Mega Man sprite comic I started while I was really into Bob and George.

MyAI and Mikkamon

I could talk all day about some of my original characters and settings. Telling their stories in full is a daunting prospect, one that I might even need better programming languages for. :-p But I've occasionally written up some notes about MyAI and Mikkamon on GitHub to get the ideas out there somewhere.

Game modding and investigation

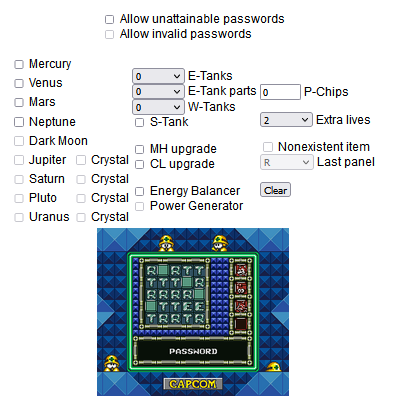

Mega Man IV and V password generators

Programming languages

My programming language ideas actually started long before I was able to bring them to fruition. I've basically been wanting to program in better languages as long as I've wanted to program at all. Here's a chronological account.

MyRegex

One of my first programming languages was a regular expression engine in Java. I didn't know the term "parser combinator" at the time, but I knew how I thought regular expressions worked, and I could model all those operations myself, so that's what I did. Thanks to this, I got the hang of programming in a monadic style (another term I didn't know at the time).
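Here is a minimal sketch of that combinator approach. It's written in Python rather than the original Java, and the names `lit`, `seq`, `alt`, `star`, and `full_match` are hypothetical, not MyRegex's actual API. Each regex operation is a function from the input text and a start index to the list of every index where a match could end. Sequencing threads each possible outcome into the next matcher. That threading is the monadic style mentioned above, with the list acting as a nondeterminism monad, and it's also what gives the engine backtracking for free.

```python
from typing import Callable, List

# A matcher takes the input text and a start index and returns every index
# where a match could end. An empty list means the match failed.
Matcher = Callable[[str, int], List[int]]


def lit(ch: str) -> Matcher:
    """Match one literal character."""
    def run(text: str, i: int) -> List[int]:
        return [i + 1] if i < len(text) and text[i] == ch else []
    return run


def seq(*parts: Matcher) -> Matcher:
    """Match each part in order (regex concatenation)."""
    def run(text: str, i: int) -> List[int]:
        positions = [i]
        for part in parts:
            # The monadic "bind": feed every possible end position into the next part.
            positions = [end for pos in positions for end in part(text, pos)]
        return positions
    return run


def alt(*options: Matcher) -> Matcher:
    """Match any one of the options (regex `|`)."""
    def run(text: str, i: int) -> List[int]:
        return [end for option in options for end in option(text, i)]
    return run


def star(inner: Matcher) -> Matcher:
    """Match zero or more repetitions (regex `*`)."""
    def run(text: str, i: int) -> List[int]:
        results: List[int] = []
        for end in inner(text, i):
            if end > i:  # ignore empty matches so repetition always makes progress
                results.extend(run(text, end))
        results.append(i)
        return results
    return run


def full_match(pattern: Matcher, text: str) -> bool:
    """True if some way of matching consumes the entire text."""
    return len(text) in pattern(text, 0)


# The equivalent of the regex (ab|a)*b
pattern = seq(star(alt(seq(lit("a"), lit("b")), lit("a"))), lit("b"))
print(full_match(pattern, "abaab"))  # True
print(full_match(pattern, "aba"))    # False
```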

Jisp

Hot on the heels of MyRegex, I put together Jisp, a rudimentary general-purpose language based on an upgrade of how I thought Lisp programs worked. The evaluation model I implemented for Jisp was very much an interpreter; not only did it have first-class macros in the fexpr sense (not that I knew the term "fexpr" at the time), but its macros processed textual code rather than s-expressions.

```
> (if (eq 'apple (cdr (cons 'pine 'apple))) 'success 'failure)
result: success
> something-we-havent-defined
result: ()
> (function-we-havent-defined 'symbol)
result: (() (quote symbol))
> "( this is a string )
result: "( this is a string )"
> (doublequote so is this )
result: "so is this"
> "and-this
result: "and-this"
> (if (eq 'string (doublequote string)) (exit) 'whoops)
result: whoops
> (if (eq "string (doublequote string)) (exit) 'whoops)
[The REPL terminates.]
```

As I started to program in Groovy and Arc, I learned about Groovy's DSL-oriented design and Arc's Lisp-style macros. With these, programmers could build most of their code in their dream language and implement the details of that language in a library.

Framewarc

Framewarc is a collection of libraries I wrote for Arc, to indulge in the ability to write arbitrary language features.

The first thing I made in Framewarc was the module system. This allowed me to pursue various library ideas without feeling like I was using up global names that would be needed by other Arc programmers.

A few of the other things I made in Framewarc were multimethod systems, inspired largely by Inform 7's rulebooks and actions. I also put together a pattern-matching DSL and a framework for MyRegex-style backtracking computations.
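Framewarc's own code isn't shown here, so the following is only a loose Python sketch of the rulebook idea, not Framewarc's API. A rulebook tries its rules in order. Each rule either decides the outcome or returns `None` to "make no decision" and pass control along, so new rules can specialize behavior without editing old ones. The names `Rulebook` and `rule` are hypothetical. Trying the most recently added rule first is also a simplification: Inform 7 orders rules mainly by how specific their conditions are.

```python
from typing import Any, Callable, List, Optional

# A rule returns a result to end the rulebook, or None to make no decision
# and let the next rule try.
Rule = Callable[..., Optional[Any]]


class Rulebook:
    def __init__(self, name: str):
        self.name = name
        self.rules: List[Rule] = []

    def rule(self, fn: Rule) -> Rule:
        """Decorator: rules added later are tried first, so they can override."""
        self.rules.insert(0, fn)
        return fn

    def __call__(self, *args: Any) -> Any:
        for rule in self.rules:
            result = rule(*args)
            if result is not None:
                return result
        raise LookupError(f"no rule in {self.name} applied to {args!r}")


describe = Rulebook("describe")


@describe.rule
def describe_anything(thing: str) -> Optional[str]:
    return f"You see {thing}."


@describe.rule
def describe_lamp(thing: str) -> Optional[str]:
    if thing == "lamp":
        return "The lamp glows softly."
    return None  # no decision: fall through to the older, more general rule


print(describe("lamp"))   # The lamp glows softly.
print(describe("table"))  # You see table.
```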

Blade

I started to want to use the right tool for the job in a very fine-grained way. In my dream language, I could write parts of my code in Groovy, I figured, and I could write other parts in Arc or in DSLs like MyRegex. I especially wanted to reduce the effort it took to install and configure all these languages to work together.

So I started to build Blade, a language intended from the outset for polyglot programming. Blade programs could contain arbitrary text (e.g., text that was code in some other programming language). It was only when a paragraph began with certain punctuation that it would be recognized and processed as Blade code.
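The text above doesn't say which punctuation Blade looked for, so the `[` marker below is a hypothetical stand-in. This minimal Python sketch shows only the filtering step. It splits a file into paragraphs separated by blank lines and keeps the ones that begin with the marker, leaving everything else untouched, whether prose or code in some other language.

```python
import re
from typing import List

# Hypothetical marker; the actual punctuation Blade recognized isn't stated here.
BLADE_MARKER = "["


def blade_paragraphs(source: str, marker: str = BLADE_MARKER) -> List[str]:
    """Return the paragraphs that should be processed as Blade code."""
    # Paragraphs are separated by one or more blank lines.
    paragraphs = re.split(r"\n\s*\n", source.strip())
    return [p for p in paragraphs if p.lstrip().startswith(marker)]


source = "Some prose.\n\n[define answer 42]\n\nMore prose."
print(blade_paragraphs(source))  # ['[define answer 42]']
```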

Like Jisp, Blade was based on syntaxes that took strings as input. However, Blade programs took this input at compile time, avoiding the main downside of Jisp's approach.

Since Blade code could be scattered opportunistically among other kinds of code, the order of Blade declarations with respect to each other was largely accidental. In order to allow them to be processed in an arbitrary order, I had objects called Leads that represented clues as to where more Blade code could be found and Calcs that represented computations in progress that could be blocked waiting for more information. Little did I know, these were basically monadic computation types, and I had implemented a concurrency trampoline.

Unfortunately, I was a stickler for indenting my code properly, and writing code in continuation-passing style created a lot of rightward drift that I had never experienced before. It was frustrating to work on Blade, and I felt like I'd made some kind of mistake.
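The Leads-and-Calcs arrangement amounts to declarations that suspend while waiting for definitions, plus a trampoline loop that resumes them once those definitions exist. Here is a minimal Python sketch of that idea under stated assumptions, not Blade's implementation. `run_all` and the `define_*` functions are hypothetical, and Python generators stand in for Calcs. Generators give the same suspend-and-resume structure as continuation-passing style, but without its rightward drift.

```python
from collections import deque
from typing import Any, Deque, Dict, Generator, List, Tuple

# A computation is a generator. It yields the name of a definition it's
# waiting on, is resumed with that definition's value, and finally returns
# a (name, value) pair that it defines.
Computation = Generator[str, Any, Tuple[str, Any]]


def run_all(computations: List[Computation]) -> Dict[str, Any]:
    """Trampoline: keep resuming whichever computations can make progress."""
    env: Dict[str, Any] = {}
    # Each entry pairs a computation with the value to resume it with.
    ready: Deque[Tuple[Computation, Any]] = deque((c, None) for c in computations)
    blocked: Dict[str, List[Computation]] = {}
    while ready:
        comp, value = ready.popleft()
        try:
            needed = comp.send(value)
        except StopIteration as done:
            name, result = done.value
            env[name] = result
            # Wake up everything that was waiting for this name.
            for waiter in blocked.pop(name, []):
                ready.append((waiter, result))
            continue
        if needed in env:
            ready.append((comp, env[needed]))
        else:
            blocked.setdefault(needed, []).append(comp)
    if blocked:
        raise RuntimeError(f"never defined: {sorted(blocked)}")
    return env


def define_total() -> Computation:
    price = yield "price"  # suspends until "price" is defined
    tax = yield "tax"
    return ("total", price + tax)


def define_tax() -> Computation:
    price = yield "price"
    return ("tax", price // 10)


def define_price() -> Computation:
    yield from ()  # needs nothing; this line only makes the function a generator
    return ("price", 100)


# Declared out of order: "total" comes first even though it depends on the others.
env = run_all([define_total(), define_tax(), define_price()])
print(env["total"])  # 110
```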

Penknife Mk. I

Having worked some more in Arc, I had a lot of ideas for improving upon Arc in terms of syntax and hygiene. I was also coming to terms with the fact that Blade didn't have a very good story for REPL-based programming the way that Arc did. If I wanted to allow Blade code to be written out of order, exactly when during a REPL interaction would Blade actually run anything?

So I decided that while Blade might be good for programming in a codebase-based workflow, a REPL-based workflow justified having a different kind of language. The two languages could be complementary.

And so I started building Penknife. Blade was a language for "freedom of declaration" (as in, writing declarations in whatever language one wanted), and Penknife was a language for "freedom of command."

One of the most remarkable features of Penknife Mk. I was the hygiene approach. Like Jisp and Blade, Penknife Mk. I had string-based syntax. However, this syntax was allowed to contain not only text but certain other objects. One of those was an object representing a change in environment. When a Penknife macro created a piece of generated code, this feature was used to swap out the caller's environment for the macro's definition-time environment for the purposes of that code, and it was used again to swap back to the caller's environment to process the macro's arguments.

A macro's definition-time environment was captured and bundled with the macro binding itself. This way, the generated code didn't actually have to embed a whole environment in it; it could just embed a relative path that navigated to the macro's environment from the caller's environment. Since the caller had to have the macro in their environment to call it in the first place, the generated code could count on the macro being there.

I still feel this separation between environments and expressions allows for a rather nice sweet spot for the kinds of environment mutation REPL users expect to be able to do. This remains one of my favorite approaches to hygiene.
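To make the environment-switching idea concrete, here is a minimal Python sketch under stated assumptions, not Penknife Mk. I's actual representation. `Binding`, `Env`, `Enter`, `Leave`, and `resolve_names` are all hypothetical. Generated code is modeled as a token stream in which `Enter(path)` switches into the environment reached by a relative path and `Leave()` switches back. Each path step names a macro, and the caller's own environment is what finds it. Modeling the two swaps with a stack is also an assumption.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple, Union


@dataclass
class Binding:
    value: str
    # For macros: the definition-time environment bundled with the binding.
    env: Optional["Env"] = None


@dataclass
class Env:
    bindings: Dict[str, Binding] = field(default_factory=dict)

    def lookup(self, name: str) -> Binding:
        return self.bindings[name]


# A relative path is a sequence of macro names. Each step looks the macro up
# in the current environment and moves into its definition-time environment,
# so generated code never has to embed an entire environment.
Path = Tuple[str, ...]


def resolve(env: Env, path: Path) -> Env:
    for macro_name in path:
        macro_env = env.lookup(macro_name).env
        if macro_env is None:
            raise ValueError(f"{macro_name} is not a macro")
        env = macro_env
    return env


@dataclass(frozen=True)
class Enter:
    """Switch to the environment reached by `path` from the current one."""
    path: Path


@dataclass(frozen=True)
class Leave:
    """Switch back to the environment that was current before the last Enter."""


Token = Union[str, Enter, Leave]


def resolve_names(caller_env: Env, tokens: List[Token]) -> List[str]:
    """Look up each name token in whichever environment is current."""
    stack = [caller_env]
    meanings: List[str] = []
    for token in tokens:
        if isinstance(token, Enter):
            stack.append(resolve(stack[-1], token.path))
        elif isinstance(token, Leave):
            stack.pop()
        else:
            meanings.append(stack[-1].lookup(token).value)
    return meanings


library_env = Env({"if": Binding("library's if"), "do": Binding("library's do")})
caller_env = Env({
    "when": Binding("when macro", env=library_env),
    "if": Binding("caller's if"),  # shadows the library's `if`
    "test": Binding("caller's test"),
    "body": Binding("caller's body"),
})

# Roughly what `[when test body]` might expand to: the macro's own names are
# resolved in its definition environment, the arguments in the caller's.
expansion = [Enter(("when",)), "if", Leave(), "test",
             Enter(("when",)), "do", Leave(), "body"]
print(resolve_names(caller_env, expansion))
# ["library's if", "caller's test", "library's do", "caller's body"]
```

Even though the caller shadows `if`, the macro's `if` still finds the library's definition, while `test` and `body` keep their meanings from the caller's environment.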

Penknife Mk. I programs are very terse, not unlike Arc programs, and Arc code can be used to fill in when something isn't defined in Penknife:

```
[= fn-if [arc (fn (test then else)
                (pk-call:if test then else))]]

[mac if [test then else]
  qq.[fn-if \,test tf0.\,then tf0.\,else]]

[mac* do [] body
  qq.[tf0 \,body]]

[mac* when [test] body
  qq.[if \,test [do \,body] nil]]

[mac* unless [test] body
  qq.[if \,test nil [do \,body]]]
```

Kernelish

On Arc Forum, the topic of first-class macros came up in discussion many times. For the sake of discussion, I put together Kernelish, a simple language interpreter that implemented fexprs in the Kernel style.
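Kernelish's own code isn't shown on this page. For readers new to Kernel-style fexprs, here is a minimal Python sketch of the idea (not Kernelish itself). S-expressions are nested lists, symbols are strings, and booleans are bound to the symbols `true` and `false` (an assumption of this sketch). `$vau` creates an operative that receives its operands unevaluated, along with the caller's environment. `wrap` turns an operative into an applicative that evaluates its operands first. Real Kernel's `$vau` takes a parameter tree and a body sequence, while this sketch takes a flat parameter list and a single body expression.

```python
from typing import Any, Callable, Dict, List, Optional


class Env:
    def __init__(self, bindings: Dict[str, Any], parent: Optional["Env"] = None):
        self.bindings = bindings
        self.parent = parent

    def lookup(self, name: str) -> Any:
        env: Optional[Env] = self
        while env is not None:
            if name in env.bindings:
                return env.bindings[name]
            env = env.parent
        raise NameError(name)


class Operative:
    """A fexpr: called with its operands unevaluated, plus the caller's environment."""
    def __init__(self, fn: Callable[[List[Any], Env], Any]):
        self.fn = fn


class Applicative:
    """What Kernel's `wrap` makes: evaluates operands, then calls an operative."""
    def __init__(self, underlying: Operative):
        self.underlying = underlying


def evaluate(expr: Any, env: Env) -> Any:
    if isinstance(expr, str):  # a symbol
        return env.lookup(expr)
    if not isinstance(expr, list) or not expr:
        return expr  # numbers and other data evaluate to themselves
    combiner = evaluate(expr[0], env)
    operands = expr[1:]
    while isinstance(combiner, Applicative):
        operands = [evaluate(o, env) for o in operands]
        combiner = combiner.underlying
    return combiner.fn(operands, env)


def vau(operands: List[Any], static_env: Env) -> Operative:
    """($vau (param ...) env-param body) builds a user-defined operative."""
    params, env_param, body = operands

    def call(args: List[Any], dynamic_env: Env) -> Any:
        local = Env(dict(zip(params, args)), static_env)
        local.bindings[env_param] = dynamic_env  # the caller's environment
        return evaluate(body, local)

    return Operative(call)


def if_(operands: List[Any], env: Env) -> Any:
    test, then, otherwise = operands
    return evaluate(then if evaluate(test, env) else otherwise, env)


def applicative(fn: Callable[[List[Any]], Any]) -> Applicative:
    return Applicative(Operative(lambda args, env: fn(args)))


ground = Env({
    "$vau": Operative(vau),
    "$if": Operative(if_),
    "wrap": applicative(lambda args: Applicative(args[0])),
    "eval": applicative(lambda args: evaluate(args[0], args[1])),
    "+": applicative(sum),
    "true": True,
    "false": False,
})

# An operative sees its operand as unevaluated code...
print(evaluate([["$vau", ["x"], "e", "x"], ["+", 1, 2]], ground))  # ['+', 1, 2]
# ...while wrapping it makes an ordinary function that sees the value.
print(evaluate([["wrap", ["$vau", ["x"], "e", "x"]], ["+", 1, 2]], ground))  # 3

# A user-defined $unless, built from $vau, $if, and eval.
unless = ["$vau", ["test", "body"], "e",
          ["$if", ["eval", "test", "e"], 0, ["eval", "body", "e"]]]
print(evaluate([unless, "false", ["+", 1, 2]], ground))  # 3
```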

Rainbow.js

Another thing that came up on Arc Forum a lot was a desire to run Arc programs in the browser. So I made Rainbow.js, a JavaScript port of the Arc programming language (specifically a port of Conan Dalton's implementation of it, Rainbow).

In the process of writing Rainbow.js, I learned that JavaScript was more capable of concurrency than I had imagined. I started to get the hang of continuation-passing style.

Lathe Comforts for JavaScript

At this point, I was committed to writing most of my code in JavaScript so that it could run in the browser. Rainbow.js was a project that I took on to get the hang of JavaScript. I started porting a lot of my library code from Arc to JavaScript. I even put together a macro system of sorts, Chops, and a (very slow) garbage collector for weak tables, Cairntaker, to support my efforts in the places JavaScript was lacking.

I was also learning about a lot of language design topics I'd never heard about before, like reactive programming and dependent type systems. I started exploring how to incorporate such ideas into my designs.

Underreact

Underreact is a dataflow library for JavaScript that could form the underlying plumbing of a continuous-time functional reactive programming language.

It makes for some rather snazzy demos, including a demo of some state machines and a demo of a rudimentary platform game. [Warning: Some flickering.]
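As a rough illustration of what "dataflow plumbing" means, here is a minimal push-based sketch in Python. It isn't Underreact's API or design. `Signal` and `lift` are hypothetical names. A derived signal recomputes whenever one of its inputs changes and then notifies its own listeners. The sketch propagates discrete changes only, so it doesn't model continuous time. It also doesn't guard against glitches (momentarily inconsistent values) in diamond-shaped graphs, which real FRP plumbing has to handle.

```python
from typing import Any, Callable, List


class Signal:
    """A changing value that notifies its listeners whenever it changes."""

    def __init__(self, value: Any):
        self._value = value
        self._listeners: List[Callable[[], None]] = []

    @property
    def value(self) -> Any:
        return self._value

    def set(self, value: Any) -> None:
        if value != self._value:  # only propagate real changes
            self._value = value
            for listener in list(self._listeners):
                listener()

    def subscribe(self, listener: Callable[[], None]) -> None:
        self._listeners.append(listener)


def lift(fn: Callable[..., Any], *inputs: Signal) -> Signal:
    """Derive a signal whose value is `fn` applied to the inputs' current values."""
    out = Signal(fn(*(s.value for s in inputs)))

    def recompute() -> None:
        out.set(fn(*(s.value for s in inputs)))

    for s in inputs:
        s.subscribe(recompute)
    return out


x = Signal(0)
y = Signal(0)
distance = lift(lambda a, b: abs(a) + abs(b), x, y)

history: List[int] = []
distance.subscribe(lambda: history.append(distance.value))
x.set(3)
y.set(-4)
print(distance.value)  # 7
print(history)         # [3, 7]
```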

Era

Era is a project that serves as the seat of my most optimistic programming language ambitions. It's the project I pursue with the aim of simplifying programming for everyone. I consider certain other languages I've worked on since starting it (Penknife Mk. II, Staccato, Cene Mk. I, and Cene Mk. II) to be components of the Era project.

Besides collecting and organizing my abstract goals for general-purpose programming language design, Era represents two ambitious but concrete goals: A generalized syntax based on collaborative hypertext documents, and a module system with a sound type system capable of serving as a knowledge representation format. I haven't made much progress on either of these goals directly, though they have served as guiding long-term goals in my other designs.

Penknife Mk. II

Eager to perfect at least some kind of syntax I could use for Era, I made a new incarnation of Penknife. Penknife Mk. II has weak opening parens (an idea I'd eventually factor out into the Parendown library for Racket) and a nestable quasiquotation syntax for strings, features I'd carry on into Staccato and Cene Mk. I.
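A weak opening paren opens a list that has no closing paren of its own. The list ends wherever the enclosing list ends, so `(a b /c d)` reads as `(a b (c d))`. This keeps deeply nested code, like chains of continuation-passing-style callbacks, from drifting rightward. Here is a minimal reader sketch in Python. Spelling the weak paren as `/` is an assumption for illustration (Parendown has its own notation), and the tokenizer is deliberately simplistic, with no string literals or comments.

```python
import re
from typing import List, Union

SExpr = Union[str, List["SExpr"]]


def tokenize(source: str) -> List[str]:
    # "(", ")", the weak opening paren "/", or a run of any other non-space characters.
    return re.findall(r"[()/]|[^\s()/]+", source)


def parse(source: str) -> List[SExpr]:
    """Parse one parenthesized form, treating "/" as a weak opening paren."""
    tokens = tokenize(source)
    pos = 0

    def read_items() -> List[SExpr]:
        # Read elements up to (but not including) the next unmatched ")".
        nonlocal pos
        items: List[SExpr] = []
        while pos < len(tokens) and tokens[pos] != ")":
            token = tokens[pos]
            pos += 1
            if token == "(":
                items.append(read_items())
                if pos == len(tokens):
                    raise SyntaxError("missing )")
                pos += 1  # consume the ")" that closes this strong paren
            elif token == "/":
                # A weak paren's list runs until the enclosing list's ")".
                items.append(read_items())
            else:
                items.append(token)
        return items

    if not tokens or tokens[0] != "(":
        raise SyntaxError("expected (")
    pos = 1
    form = read_items()
    if pos == len(tokens):
        raise SyntaxError("missing )")
    return form


print(parse("(a b /c d)"))  # ['a', 'b', ['c', 'd']]
print(parse("(let x 1 /let y 2 /+ x y)"))
# ['let', 'x', '1', ['let', 'y', '2', ['+', 'x', 'y']]]
```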

Penknife Mk. II breaks from my usual habit from Jisp, Blade, and Penknife Mk. I of having macros take text as input. It embraces a more s-expression-based syntax, albeit one that has a sophisticated string literal syntax for situations where string-based syntax is needed.

Penknife Mk. II also experiments with features that could come in handy for JavaScript programming. One is simply that it compiles to the kind of world-passing and continuation-passing style that I had arrived at for working with flexible control of side effects in JS. The other is that Penknife values can implement custom behaviors for aliasing, which allows them to do reference counting for reliably timed resource teardown. (This covers territory similar to Rust's Drop, Copy, and Clone traits.)
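The custom aliasing idea can be sketched in Python with explicit `alias` and `release` calls. These stand in for the hooks the language itself would invoke on every variable copy and scope exit. That is an assumption about the mechanics, and the names `Shared`, `alias`, and `release` are hypothetical. The point is that teardown happens at a predictable moment, the instant the last alias is released, rather than whenever a garbage collector gets around to it.

```python
from typing import List


class Shared:
    """A resource handle that tears itself down when its last alias goes away."""

    def __init__(self, name: str, log: List[str]):
        self.name = name
        self.log = log
        self.count = 1
        log.append(f"open {name}")

    def alias(self) -> "Shared":
        # Would run whenever the value is copied into another variable.
        self.count += 1
        return self

    def release(self) -> None:
        # Would run whenever a variable holding the value goes out of scope.
        if self.count <= 0:
            raise RuntimeError(f"{self.name} released too many times")
        self.count -= 1
        if self.count == 0:
            self.log.append(f"close {self.name}")  # deterministic teardown point


log: List[str] = []
handle = Shared("socket", log)
copy = handle.alias()  # two live aliases
handle.release()       # one alias remains, so nothing is torn down yet
log.append("still using copy")
copy.release()         # the last alias is gone: teardown happens right here
print(log)  # ['open socket', 'still using copy', 'close socket']
```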

I was also hoping the customizable aliasing would allow Penknife values to be used to build up dataflow pipelines like those of Underreact. However, I didn't come up with a good way to make it work with conditionals, and I found that allowing this custom aliasing behavior on every single variable in the language made the language rather slow, so I don't consider this part of the experiment to have fully panned out.

Staccato

With my mind on run-time debugging support and suspend/resume functionality, I put together Staccato, a language spun off of Penknife Mk. II that had a more fully specified format for call stacks.

With Staccato, I also got more into the habit of pure functional programming. I had plans for managing pure computations, computations that had merely monotonic read-only side effects, computations that had monotonic read-write side effects, and computations with arbitrary side effects.

I also started to think in terms that were a bit more reflective. I wanted to support reactive programming, so I thought of variables not only as representing singular values but as representing whole communication channels. I started wanting to approach error handling in terms of communicating with an entity that represented the language runtime itself.

And once I wrote an ahead-of-time compiler from Staccato to JavaScript, I started to think about Staccato programs as networks of code that would eventually be crawled once it came time to translate them to another language.

At that point, predictable call stack representations were one of the least interesting parts of Staccato to me, so I spun off another language from it, Cene.

Cene Mk. I

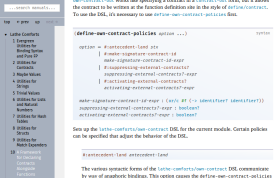

Cene Mk. I is a pure functional language with concurrent macroexpansion and a JavaScript FFI. I've built a text generation DSL in Cene, as well as a little screenplay-like DSL.

In many ways, Cene is a culmination of the languages before it. It directly carries on the syntax innovations of Penknife Mk. II and the approach to side effects and cross-compilation that Staccato arrived at. Cene's declarations are processed concurrently just like Blade's.

Cene Mk. II is the latest experimental work on Cene, rebuilding it with better error messages, a module system, and a few other refinements. At certain points in the design of Cene Mk. II's module system, I've considered the ideals of Era's ambitious module system to be within reach.

Lathe libraries for Racket

Cene Mk. I collected a lot of ideas into one language, and it got to be hard to talk about one feature without first explaining all the others. So I started separating some of the ideas out into individual libraries for Racket, the closest existing language to Cene that I knew of.

First of all, I've separated out the weak opening parens from Penknife Mk. II into Parendown (repo | docs). Parendown has impacted the design of several other syntaxes I use in Racket, including the general-purpose utilities in Lathe Comforts for Racket (repo | docs) and the debug tracing utilities in Lathe Debugging (repo).

I've done a deeper investigation of the quasiquotation syntax and separated some of that out into Punctaffy (repo | docs). Along the way toward Punctaffy, I built out some category theory abstractions in Lathe Morphisms (repo | docs) and some ordinal arithmetic operations in Lathe Ordinals (repo | docs).

I've separated out some refinements of the Blade- and Staccato-style concurrency ideas into Interconfection (repo | partial docs). It's still rather unstable, and it's a big system in its own right. A lot of the Cene Mk. II codebase is basically just a wrapper over Interconfection.

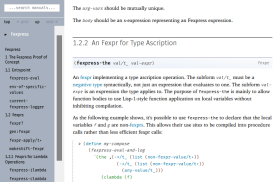

Fexpress

Fexpress is a proof-of-concept language core in which fexprs and compiled functions can coexist.

I originally tried to make Fexpress back when I was porting Rainbow.js, just to prove an fexpr-supporting language didn't have to be slow for programs that resembled lambda calculus programs. However, I didn't know enough to make progress on it at the time.

Now, after a particularly insightful week or so of coding, Fexpress is working. I'm surprised myself, and it's a little ironic because I'm not all that inclined to use fexprs in practice. However, I do think it occupies a certain place in the language design space that's worth accounting for.

Cronut

Although most of my serious attention to language design has been focused on Cene and the related Racket libraries, I have been considering approaches that are more casual or that do more to embrace and give back to the Racket ecosystem.

There are also certain new ideas I've had about cyclic module dependencies, parameterizable modules, first-class modules, the Expression Problem, multiple front-end syntax layers, facilitated convergent evolution of libraries, and the reporting of source locations and errors through multiple abstraction layers. I don't think Cene Mk. II is quite stable enough yet to pursue all these ideas there, so I've been considering exploring some of them more casually as Racket libraries.

Other

Rocketnia.com

The website you're looking at is written in a static site generator I made myself called Chopsgen. It uses the same kind of deterministic concurrency model that went into the Cene macroexpander.

I wrote this static site generator at a time when I wanted to be able to run all my code in the browser, even the build tools themselves. In a clone of the repo, the file html-build.html shows a preview of the site in an iframe, and it offers a button to download the processed .html files in a .zip archive, ready to be unpacked and uploaded. In practice, I actually do a more sophisticated kind of deployment, but it's nice to have this as a fallback.

© 2005–2010, 2013, 2022 Ross Angle (Rocketnia)

This page was last updated 13-Apr-2022.